It seems like only a couple of months ago we were excited about fiber optic cable that twisted light to carry data at 1.6 Tbps per strand. Now a Petabit network is the new benchmark. U.K. and Japanese researchers mashed up software-defined networking (SDN) and multicore fiber to produce the first Petabit pipe, according to Kevin Fitchard at GigaOM. A Petabit is one quadrillion (1,000,000,000,000,000 or 10^15) bits, or one thousand Terabits.

Petabit network uses multicore fibers

The researchers mashed up multicore fibers and SDN to make very high-speed networks programmable. GigaOM speculates this will allow carriers to adjust network capacity and latency to meet the needs of the traffic traveling over their networks. GigaOM explains that the fiber is unlike today’s fiber, in which a single strand of glass, or core, carries a single beam of light. Multicore fiber is exactly what its name implies: multiple cores, each carrying a single core’s worth of capacity over the same link. Professor Dimitra Simeonidou at the University of Bristol called current single-core fiber a capacity bottleneck.

Space Division Multiplexing

The multicore group, led by NICT and NTT in Japan, built a 450 km (280 mile) section of fiber optics using 12 cores in two rings, capable of transmitting 409 Tbps in either direction. That’s 818 Tbps in total, which is within spitting distance of seemingly mythical Petabit speeds, according to GigaOM. The multicore fiber (MCF) research relies on the Space Division Multiplexing (SDM) provided by the multicore fibers.
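For anyone who wants to check the math, here is a quick sketch of the arithmetic behind those numbers. It only uses the figures quoted above (409 Tbps each way, two directions); the function name `link_summary` and the constant names are made up for illustration.

```python
# Back-of-the-envelope check of the capacity figures quoted in the article.
TBPS = 10**12  # bits per second in one Terabit per second
PBPS = 10**15  # bits per second in one Petabit per second


def link_summary(per_direction_tbps, directions=2):
    """Return total capacity and how far it falls short of 1 Pbps."""
    total_tbps = per_direction_tbps * directions
    return {
        "total_tbps": total_tbps,
        # Share of a full Petabit per second that the link reaches.
        "fraction_of_petabit": total_tbps * TBPS / PBPS,
        # Terabits per second still missing to reach 1 Pbps.
        "shortfall_tbps": PBPS // TBPS - total_tbps,
    }


summary = link_summary(409)
print(summary)
```

With 409 Tbps in each direction, the total works out to 818 Tbps, roughly 82% of a Petabit, with 182 Tbps still to go.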

To control that massive bandwidth, a team from the High Performance Networks Group at the University of Bristol created an OpenFlow software-based control element. The Brits implemented an interface that dynamically configures the network nodes so that the network can deal more effectively with application-specific traffic requirements such as bandwidth and Quality of Transport.
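To get a feel for what "programmable" capacity could mean, here is a toy sketch of a controller handing out whole fiber cores to applications based on the bandwidth they ask for. This is not OpenFlow and not the Bristol team's code; the names (`Request`, `allocate_cores`), the greedy largest-first policy, and the numbers (12 cores at 30 Tbps each) are all assumptions made up for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    app: str             # hypothetical application name
    bandwidth_tbps: int  # bandwidth the application asks for


def allocate_cores(requests, core_capacity_tbps, num_cores):
    """Greedy toy policy: serve the largest requests first, whole cores only.

    Returns (plan, free_cores), where plan maps each app to the number of
    cores it received (0 means the request was rejected).
    """
    free = num_cores
    plan = {}
    for req in sorted(requests, key=lambda r: r.bandwidth_tbps, reverse=True):
        needed = math.ceil(req.bandwidth_tbps / core_capacity_tbps)
        if needed <= free:
            plan[req.app] = needed
            free -= needed
        else:
            plan[req.app] = 0  # not enough cores left for this request
    return plan, free


# Hypothetical demand on a 12-core fiber, assuming 30 Tbps per core.
demo_requests = [Request("video", 100), Request("cloud", 250), Request("backup", 40)]
plan, free_cores = allocate_cores(demo_requests, core_capacity_tbps=30, num_cores=12)
print(plan, free_cores)
```

A real controller would weigh latency, Quality of Transport, and sharing within a core; the point here is only that capacity decisions move into software that can be changed on the fly.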

According to the researchers, this was the first time SDN was used on a multicore network. The University of Bristol presser announcing the new technology says it will overcome critical capacity barriers that threaten the evolution of the Internet.

rb-

OK, so that is really – really – really fast. We also know from a 2011 New Scientist article that the total capacity of one of the world’s busiest routes, between New York and Washington DC, is only a few Terabits per second. With bandwidth-hungry applications like cloud computing, social media, and video-streaming continuously growing, network planners at firms like AT&T (T), Verizon (VZ), and the NSA are forced to find new ways to grow their capacity.

Comcast (CMCSA) just finished a 1 Tbps network field trial on a production network between Ashburn, VA, and Charlotte, NC. Most likely the first place Pbps networking will be used is in the mega-data centers of the likes of Google (GOOG), Facebook (FB), or Microsoft (MSFT).

Ralph Bach has been in IT long enough to know better and has blogged from his Bach Seat about IT, careers, and anything else that catches his attention since 2005. You can follow him on LinkedIn, Facebook, and Twitter. Email the Bach Seat here.

It’s not a good time for tech in schools. The security woes at school are not limited to the iPad debacle at

Keith Krueger, the CEO for the

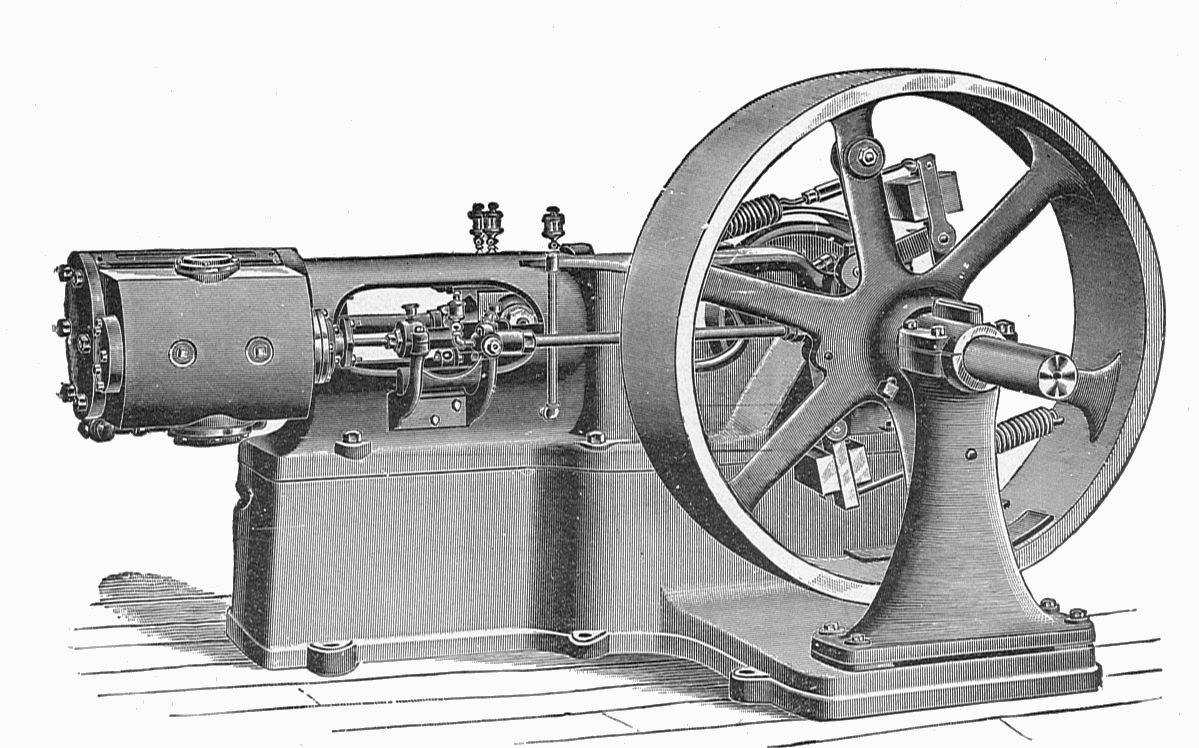

I recall as a newbie techie the first time I had to be in on Sunday morning to work with the site engineer to crank up the 100 HP Cummins standby generator. The firm ran the monthly test to make sure the critical systems stayed up. The generator was enclosed in a secure room that contained the heat and noise. The exhaust was vented out. One of my regular jobs was to kick the standby 55-gallon drum of diesel with the hand pump on it to make sure there was fuel available for the generator.
